The impact of artificial intelligence on our daily lives is constantly growing, changing how we work, learn, and communicate. AI is evolving rapidly and opens up many beneficial applications, but we must not lose sight of fundamental rights, values, and security. The European Union has adopted the world’s first comprehensive AI law, which aims to encourage innovation while ensuring the technology is used responsibly.

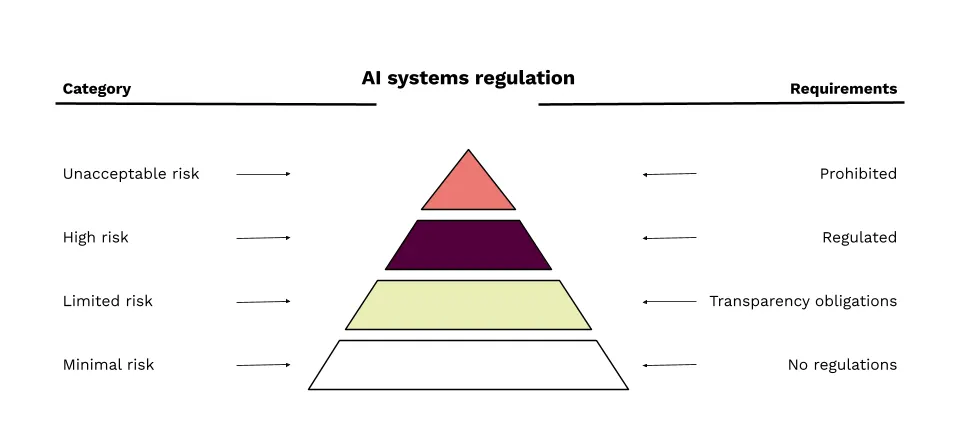

The AI Act primarily concerns providers (developers) and deployers of AI solutions. It is important for us to know what is permitted, what is regulated, and what is prohibited. For example, AI systems that could violate human rights or pose a threat (e.g., the social credit system used in China) are banned. High-risk AI systems (e.g., systems for evaluating job applicants) must undergo strict checks to ensure they are safe, reliable, and ethical.

The regulation takes into account the potential impact of AI systems on society and individuals.

The EU divides AI systems into four risk-based categories.

The use of AI systems with unacceptable risk is prohibited

Systems with unacceptable risk pose a serious threat to human rights and society. Such AI solutions are banned. Here are some examples:

- Manipulation of people: For example, ads that try to steer children or the elderly toward making harmful decisions.

- Mass surveillance: Systems that constantly monitor people in public places using biometric data or collect information on their movements and behavior.

- Social scoring: For example, systems that rate people based on their social behavior or personal characteristics and treat them unfavorably as a result.

- Discriminatory algorithms: Systems that make unfair or biased decisions in hiring, lending, or other situations that can significantly impact lives.

- Decision-making without human intervention: For example, medical AI solutions that make treatment decisions or diagnoses without a doctor’s involvement.

These prohibitions apply 6 months after the EU legal act enters into force.

Narrow exceptions exist. For example, law enforcement may use otherwise prohibited real-time biometric identification where the public interest outweighs the risks (searching for crime victims or missing persons, preventing a terrorist attack, etc.).

High-risk AI systems are regulated

High-risk AI solutions have a significant impact on society and people and require strict regulation. Here are some examples of such systems:

- Medical diagnostic systems: AI systems that help diagnose diseases or conditions, e.g., by analyzing medical data and helping doctors choose treatment.

- Hiring algorithms: Systems that evaluate job seekers and help employers select candidates.

- Lending algorithms: Systems that decide on loan approval based on applicants’ credit history, income, and other financial data.

- Law enforcement AI systems: Systems used in law enforcement, e.g., for identification, surveillance, or risk assessment.

- Industrial control systems: Systems that manage and optimize industrial processes, e.g., in production lines or machinery control.

“Creating a high-risk AI system requires submitting an application and fulfilling the requirements.”

Creators of high-risk AI must:

- Create and implement a risk management system.

- Ensure high quality of datasets.

- Log activities to ensure traceability of results.

- Prepare comprehensive technical documentation.

- Prepare clear instructions for the deployer (the organization that puts the system into use).

- Ensure the possibility of human oversight.

- Design the system to achieve appropriate accuracy, resilience, and cybersecurity.
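The obligations listed above lend themselves to a simple internal checklist. The following is a minimal sketch, not an official compliance tool: the identifiers in `HIGH_RISK_OBLIGATIONS` and the function `missing_obligations` are hypothetical names chosen for illustration, and each one only paraphrases a bullet from the list above.

```python
# Hypothetical identifiers, one per obligation listed in this article.
HIGH_RISK_OBLIGATIONS = (
    "risk_management_system",
    "dataset_quality",
    "activity_logging",
    "technical_documentation",
    "instructions_for_use",
    "human_oversight",
    "accuracy_resilience_cybersecurity",
)


def missing_obligations(completed):
    """Return the obligations not yet marked as completed, in list order."""
    done = set(completed)
    unknown = done - set(HIGH_RISK_OBLIGATIONS)
    if unknown:
        # Guard against typos so a misspelled item is not silently ignored.
        raise ValueError(f"Unknown obligation(s): {sorted(unknown)}")
    return [item for item in HIGH_RISK_OBLIGATIONS if item not in done]


# Example: a project that has only set up logging and documentation so far.
print(missing_obligations(["activity_logging", "technical_documentation"]))
```

A checklist like this only tracks whether each item has been addressed; whether an item is actually fulfilled still has to be judged against the text of the regulation.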

Transparency requirements apply to limited-risk systems

Limited-risk AI solutions interact with users automatically or recommend content, such as videos or courses. Because their impact is moderate, the main requirement is transparency: users must be informed. For example:

- Chatbots and other interactive systems must be clearly labeled so users know they are interacting with AI.

- Content recommendation algorithms on web platforms and social media must show the basis on which recommendations are made.
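For chatbots, the labeling requirement can be met in many ways. One possible approach, sketched below, is to prepend a disclosure to the first reply of each conversation. The wording of `AI_DISCLOSURE` and the `ChatSession` class are assumptions made for illustration; the regulation does not prescribe a specific text or mechanism.

```python
# Hypothetical disclosure text; the exact wording is an assumption.
AI_DISCLOSURE = "Note: you are chatting with an AI system."


class ChatSession:
    """Tracks whether the user has already been told they are talking to AI."""

    def __init__(self):
        self.disclosed = False

    def label_reply(self, reply: str) -> str:
        """Prepend the disclosure to the first reply of the session only."""
        if self.disclosed:
            return reply
        self.disclosed = True
        return f"{AI_DISCLOSURE}\n{reply}"


session = ChatSession()
print(session.label_reply("Hello! How can I help?"))
print(session.label_reply("Our office opens at 9:00."))
```

Keeping the disclosure state per session means every new conversation starts with the notice, while later replies stay uncluttered.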

No specific regulations are established for minimal-risk systems

AI systems that have a small impact on society and individuals are not subject to special regulations. Examples of such systems include:

- Email filtering systems that automatically move emails to the spam folder. The impact of this action on users is minimal.

- Simple computer games where the use of AI poses no significant threat and does not require strict regulation.
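The four risk categories and their treatment can be summarized as a small lookup. This is an illustrative sketch only: the `RiskTier` enum, the `TREATMENT` summaries, and the `EXAMPLE_USE_CASES` table are hypothetical names built from the examples in this article, and classifying a real system requires a legal assessment rather than a dictionary lookup.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# One-line treatment per tier, paraphrased from the section headings above.
TREATMENT = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "regulated, strict requirements apply",
    RiskTier.LIMITED: "transparency requirements apply",
    RiskTier.MINIMAL: "no specific regulations",
}

# Hypothetical table using examples mentioned in this article.
EXAMPLE_USE_CASES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "hiring algorithm": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def treatment_for(use_case: str) -> str:
    """Return a short summary for one of the known example use cases."""
    tier = EXAMPLE_USE_CASES[use_case.lower()]
    return f"{tier.value} risk: {TREATMENT[tier]}"


print(treatment_for("chatbot"))  # limited risk: transparency requirements apply
```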

General Purpose AI models (GPAI)

General-purpose AI models, such as those behind OpenAI’s ChatGPT and Google’s Gemini, must comply with specific rules regardless of their risk level. Their providers must supply technical documentation and usage instructions, and comply with EU copyright law. They must also publish a summary of the training data to support transparency and responsible use of the solutions.

These requirements will apply 12 months after the EU legal act enters into force.
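The 6-month and 12-month periods mentioned in this article can be turned into approximate calendar dates. The sketch below assumes an entry-into-force date of 1 August 2024, and `add_months` is a hypothetical helper written for this example. The regulation fixes its application dates explicitly in its final provisions, so the computed values are only an approximation and the legal text should be checked for the binding dates.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Add whole months to a date, clamping the day to the month's length."""
    total = start.month - 1 + months
    year = start.year + total // 12
    month = total % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


# Assumption: the act entered into force on 1 August 2024.
ENTRY_INTO_FORCE = date(2024, 8, 1)

print("Prohibitions (6 months):", add_months(ENTRY_INTO_FORCE, 6))
print("GPAI rules (12 months): ", add_months(ENTRY_INTO_FORCE, 12))
```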

Summary

The EU AI Act is an important step toward the responsible use of artificial intelligence. It ensures that AI solutions meet specific requirements, including transparency, privacy, and the protection of human rights. Our company strictly follows these requirements to ensure the solutions we create are not only innovative but also secure and responsibly managed.

Sources

European Parliament, and Council of the European Union. “Regulation of the European Parliament and of the Council laying down harmonised rules on artificial intelligence (Artificial Intelligence Act).” 2024.

European Commission. “AI Act”